Introduction

The Inverno Framework has been created with the objective of facilitating the creation of Java enterprise applications with maximum modularity, performance, maintainability and customizability.

New technologies emerge all the time, questioning what has been working for years. We strongly believe that we must instead recognize and preserve proven solutions and only provide what is missing or change what is no longer in line with widely accepted evolutions. The Java platform has proven to be resilient to change and offers features that make it an ideal choice to create durable and efficient applications in complex technical and organizational environments, which is precisely what is expected in an enterprise world. The Inverno Framework is a fully integrated suite of modules built for the Java platform that fully embraces this philosophy by keeping things well organized, strict and explicit with clean APIs and comprehensive documentation.

The Inverno framework is open source and licensed under version 2.0 of the Apache License.

Design principles

An Inverno application is inherently modular. Modularity is a key design principle which guarantees a proper separation of concerns, providing flexibility, maintainability, stability and ease of development regardless of the lifespan of an application or the number of people involved in developing it. An Inverno module is built as a standard Java module, extending the Java module system with Inversion of Control and Dependency Injection performed at compile time.

The Inverno Framework extends the Java compiler to generate code at compile time when it makes sense to do so, which is precisely what annotations were initially created for. When done appropriately, code generation can be extremely valuable: issues can be detected ahead of time by analyzing the code during compilation, and the runtime footprint can be reduced by transferring costly processing like IoC/DI to the compiler, which improves runtime performance at the same time.

The framework uses a state of the art threading model and it has been designed from the ground up to be fully non-blocking and reactive in order to deliver very high performance while simplifying development of highly distributed applications requiring back pressure management.
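As an illustration of what back pressure management means in practice, here is a minimal sketch built on the JDK `java.util.concurrent.Flow` interfaces rather than on Inverno's own reactive stack. The class names `BackPressureSketch` and `RangePublisher` are hypothetical. The subscriber asks for items in small batches and the publisher never emits more than what was requested.

```java
import java.util.ArrayList;
import java.util.List;
import java.util.concurrent.Flow;

// Illustrative only: uses the JDK Flow API, not the Inverno reactive stack.
final class BackPressureSketch {

    // A synchronous publisher emitting 1..count, never more than the pending demand.
    static final class RangePublisher implements Flow.Publisher<Integer> {
        private final int count;

        RangePublisher(int count) { this.count = count; }

        @Override
        public void subscribe(Flow.Subscriber<? super Integer> subscriber) {
            subscriber.onSubscribe(new Flow.Subscription() {
                private int next = 1;
                private long demand;
                private boolean emitting;
                private boolean done;

                @Override
                public void request(long n) {
                    if (done) return;
                    demand += n;
                    if (emitting) return; // re-entrant call: the outer loop drains the new demand
                    emitting = true;
                    while (demand > 0 && next <= count && !done) {
                        demand--;
                        subscriber.onNext(next++);
                    }
                    emitting = false;
                    if (next > count && !done) {
                        done = true;
                        subscriber.onComplete();
                    }
                }

                @Override
                public void cancel() { done = true; }
            });
        }
    }

    // Consumes the publisher in batches and records every signal in order.
    static List<String> consume(int count, int batchSize) {
        List<String> events = new ArrayList<>();
        new RangePublisher(count).subscribe(new Flow.Subscriber<Integer>() {
            private Flow.Subscription subscription;
            private int receivedInBatch;

            @Override
            public void onSubscribe(Flow.Subscription s) {
                subscription = s;
                events.add("request " + batchSize);
                s.request(batchSize);
            }

            @Override
            public void onNext(Integer item) {
                events.add("item " + item);
                if (++receivedInBatch == batchSize) {
                    receivedInBatch = 0;
                    events.add("request " + batchSize);
                    subscription.request(batchSize); // ask for more only once the batch is handled
                }
            }

            @Override
            public void onError(Throwable t) { events.add("error"); }

            @Override
            public void onComplete() { events.add("complete"); }
        });
        return events;
    }

    public static void main(String[] args) {
        consume(5, 2).forEach(System.out::println);
    }
}
```

The consumer, not the producer, sets the pace: a slow subscriber simply delays its next `request` call instead of being flooded.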

The inherent modularity of the framework based on the Java module system guarantees a nice and clean project structure which prevents misuse and abuse by clearly separating the concerns and exposing well designed APIs.

Special attention has been paid to configuration and customization which are often overlooked and yet vital to create applications that can adapt to any environment or context.

Getting help

This reference guide starts with an overview of the Inverno core, modules and tools projects, which gives a good idea of what can be done with the framework. It is followed by more comprehensive documentation that should guide you in the creation of an Inverno project using the Inverno distribution, the use of the core IoC/DI framework, the various modules including the configuration and the Web server modules, and the tools to run, package and distribute Inverno components and applications.

The API documentation provides plenty of details on how to use the various APIs. The getting started guide is also a good starting point to get into it.

Feel free to report bugs and feature requests or simply ask questions using GitHub's issue tracking system if you run into any issue or wish to see new functionality implemented in the framework.

Overview

Inverno Core

The Inverno core framework project provides an Inversion of Control and Dependency Injection framework for the Java™ platform. It has the particularity of not using reflection for object instantiation and dependency injection, everything being verified and done statically during compilation.

This approach has many advantages over other IoC/DI solutions, starting with the static checking of the bean dependency graph at compile time, which guarantees that beans are properly wired before the program ever runs. Debugging is also made easier since you can actually access the source code where beans are instantiated and wired together. Finally, the startup time of a program is greatly reduced since everything is known in advance. Such a program can even be further optimized with ahead-of-time compilation solutions like GraalVM.
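To make the contrast with reflection-based injection concrete, the following hand-written sketch shows the kind of plain Java wiring that compile-time dependency injection amounts to. It is not the code generated by the Inverno compiler, and all class names (`WiringSketch`, `Greeter`, `GreetingService`) are hypothetical.

```java
// Illustrative only: a hand-written stand-in for a generated wiring class.
final class WiringSketch {

    // Hypothetical beans; in an Inverno module they would carry the @Bean annotation.
    static final class Greeter {
        String greet(String name) { return "Hello " + name; }
    }

    static final class GreetingService {
        private final Greeter greeter;

        GreetingService(Greeter greeter) { this.greeter = greeter; }

        String welcome(String name) { return greeter.greet(name) + ", welcome!"; }
    }

    // Beans are created with ordinary constructor calls, so a missing or mistyped
    // dependency is rejected by the compiler instead of failing at startup.
    private final Greeter greeter = new Greeter();
    private final GreetingService greetingService = new GreetingService(greeter);

    GreetingService greetingService() { return greetingService; }

    public static void main(String[] args) {
        System.out.println(new WiringSketch().greetingService().welcome("John"));
    }
}
```

Because the wiring is ordinary source code, a debugger can step through it and no classpath scanning is needed at startup.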

The framework has been designed to build highly modular applications using standard Java modules. An Inverno module supports encapsulation: it only exposes the beans that need to be exposed, and it clearly specifies the dependencies it requires to operate properly. This greatly improves program stability over time and simplifies the use of a module. Since an Inverno module has a very small runtime footprint, it can also be easily integrated in any application.

Creating an Inverno module

An Inverno module is a regular Java module that requires the io.inverno.core module and is annotated with the @Module annotation. The following hello module is a simple Inverno module:

    @io.inverno.core.annotation.Module
    module io.inverno.example.hello {
        requires io.inverno.core;
    }

An Inverno bean can be a regular Java class annotated with the @Bean annotation. A bean represents the basic building block of an application, which is typically composed of multiple interconnected bean instances. The following HelloService bean can be used to create a basic application:

    package io.inverno.example.hello;

    import io.inverno.core.annotation.Bean;

    @Bean
    public class HelloService {

        public HelloService() {}

        public void sayHello(String name) {
            System.out.println("Hello " + name + "!!!");
        }
    }

At compile time, the Inverno framework will generate a module class named after the module, io.inverno.example.hello.Hello in our example. This class contains all the logic required to instantiate and wire the application beans at runtime. It can be used in a Java program to access and use the HelloService. This program can be in the same Java module or in any other Java module which requires module io.inverno.example.hello:

    package io.inverno.example.hello;

    import io.inverno.core.v1.Application;

    public class Main {

        public static void main(String[] args) {
            Hello hello = Application.with(new Hello.Builder()).run();
            hello.helloService().sayHello(args[0]);
        }
    }

Building and running with Maven

Developing an Inverno module is straightforward with Apache Maven: you simply need to create a standard Java project that inherits from the io.inverno.dist:inverno-parent project and declares a dependency on io.inverno:inverno-core:

    <!-- pom.xml -->
    <project xmlns="http://maven.apache.org/POM/4.0.0"
             xmlns:xsi="http://www.w3.org/2001/XMLSchema-instance"
             xsi:schemaLocation="http://maven.apache.org/POM/4.0.0 http://maven.apache.org/xsd/maven-4.0.0.xsd">
        <modelVersion>4.0.0</modelVersion>

        <parent>
            <groupId>io.inverno.dist</groupId>
            <artifactId>inverno-parent</artifactId>
            <version>1.13.0</version>
        </parent>

        <groupId>io.inverno.example</groupId>
        <artifactId>hello</artifactId>
        <version>1.0.0-SNAPSHOT</version>

        <dependencies>
            <dependency>
                <groupId>io.inverno</groupId>
                <artifactId>inverno-core</artifactId>
            </dependency>
        </dependencies>
    </project>

Java source files for the io.inverno.example.hello module must be placed in the src/main/java directory. The module can then be built using Maven:

    $ mvn install

You can then run the application:

    $ mvn inverno:run -Dinverno.run.arguments=John
    [INFO] --- inverno-maven-plugin:1.6.0:run (default-cli) @ app-hello ---
    [INFO] Running project: io.inverno.example.hello@1.0.0-SNAPSHOT...
    Hello John!!!

Building and running with pure Java

You can also choose to build your Inverno module using pure Java commands. Assuming Inverno framework modules are located under lib/ directory and Java source files for io.inverno.example.hello module are placed in src/io.inverno.example.hello directory, you can build the module with the javac command:

    $ javac --processor-module-path lib/ --module-path lib/ --module-source-path src/ -d jmods/ --module io.inverno.example.hello

The application can then be run as follows:

    $ java --module-path lib/:jmods/ --module io.inverno.example.hello/io.inverno.example.hello.Main John
    Hello John!!!

Inverno Modules

The Inverno modules framework project provides a collection of components for building highly modular and powerful applications on top of the Inverno IoC/DI framework.

While being fully integrated, any of these modules can also be used individually in any application thanks to the high modularity and low footprint offered by the Inverno framework.

The objective is to provide a complete consistent set of high-end tools and components for the development of fast and maintainable applications.

Using a module

Modules can be used in an Inverno module by defining dependencies in the module descriptor. For instance, you can create a Web application module using the boot and web-server modules:

    @io.inverno.core.annotation.Module
    module io.inverno.example.webApp {
        requires io.inverno.mod.boot;
        requires io.inverno.mod.web.server;
    }

A simple microservice application can then be created in a few lines of code as follows:

    import io.inverno.core.annotation.Bean;
    import io.inverno.core.v1.Application;
    import io.inverno.mod.base.resource.MediaTypes;
    import io.inverno.mod.web.server.annotation.WebController;
    import io.inverno.mod.web.server.annotation.WebRoute;

    @Bean
    @WebController
    public class MainController {

        @WebRoute(path = "/message", produces = MediaTypes.TEXT_PLAIN)
        public String getMessage() {
            return "Hello, world!";
        }

        public static void main(String[] args) {
            Application.with(new WebApp.Builder()).run();
        }
    }

Please refer to Inverno distribution for detailed setup and installation instructions.

Comprehensive reference documentation is available for Inverno core and Inverno modules.

Several example projects showing various features are also available in the Inverno example project. They can also be used as templates to start new Inverno application or component projects.

Feel free to report bugs and feature requests in GitHub's issue tracking system if you run into any issue or wish to see new functionality implemented in the framework.

List of modules

The framework currently provides the following modules.

io.inverno.mod.base

The Inverno base module defines foundational APIs of the Inverno framework modules:

- Conversion API used to convert objects from/to other objects

- Concurrent API defining the reactive threading model API

- Net API providing URI manipulation as well as low level network client and server utilities

- Reflect API for manipulating parameterized types at runtime

- Resource API to read/write any kind of resources (e.g. file, zip, jar, classpath, module...)

io.inverno.mod.boot

The Inverno boot module provides base services to an application:

- the reactor which defines the reactive threading model of an application

- a net service used for the implementation of optimized network clients and servers

- a media type service used to determine resource media types

- a resource service used to access resources based on URIs

- a basic set of converters to decode/encode JSON, parameters (string to primitives or common types), media types (text/plain, application/json, application/x-ndjson...)

- a worker thread pool used to execute tasks asynchronously

- a JSON reader/writer

io.inverno.mod.configuration

The Inverno configuration module defines an application configuration API providing great customization and configuration features to multiple parts of an application (e.g. system configuration, multitenant configuration, user preferences...).

This module also introduces the .cprops configuration file format which facilitates the definition of complex parameterized configuration.

In addition, it also provides implementations for multiple configuration sources:

- a command line configuration source used to load configuration from command line arguments

- a map configuration source used to load configuration stored in an in-memory map

- a system environment configuration source used to load configuration from environment variables

- a system properties configuration source used to load configuration from system properties

- a .properties file configuration source used to load configuration stored in a .properties file

- a .cprops file configuration source used to load configuration stored in a .cprops file

- a Redis configuration source used to load/store configuration from/to a Redis data store with support for configuration versioning

- a composite configuration source used to combine multiple sources with support for smart defaulting

- an application configuration source used to load the system configuration of an application from a set of common configuration sources in a specific order, for instance: command line, system properties, system environment, local configuration.cprops file and configuration.cprops file resource in the application module

Configurations are defined as simple interfaces in a module which are processed by the Inverno compiler to generate configuration loaders and beans to make them available in an application with no further effort.
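The idea of querying several configuration sources in a fixed order can be sketched in plain Java as follows. This does not use the io.inverno.mod.configuration API: the class `LayeredConfigSketch`, its methods and the property names are hypothetical, and each source is modeled as a simple lookup function.

```java
import java.util.List;
import java.util.Map;
import java.util.Optional;
import java.util.function.Function;

// Illustrative only: this is not the io.inverno.mod.configuration API.
final class LayeredConfigSketch {

    // Returns the value from the first source, in order, that defines the property.
    static Optional<String> resolve(String name, List<Function<String, Optional<String>>> sources) {
        return sources.stream()
            .map(source -> source.apply(name))
            .flatMap(Optional::stream)
            .findFirst();
    }

    // Wraps an in-memory map as a source.
    static Function<String, Optional<String>> mapSource(Map<String, String> values) {
        return name -> Optional.ofNullable(values.get(name));
    }

    public static void main(String[] args) {
        Map<String, String> commandLine = Map.of("server.port", "9090");
        Map<String, String> defaults = Map.of("server.port", "8080", "server.host", "localhost");

        // Highest priority first: command line, then system properties, then defaults.
        List<Function<String, Optional<String>>> sources = List.of(
            mapSource(commandLine),
            name -> Optional.ofNullable(System.getProperty(name)),
            mapSource(defaults));

        System.out.println(resolve("server.port", sources).orElse("undefined"));
        System.out.println(resolve("server.host", sources).orElse("undefined"));
    }
}
```

A value set on the command line wins over a default, while properties that are only defined in a lower-priority source are still resolved.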

io.inverno.mod.discovery

The Inverno discovery module defines the foundational API for service discovery. At its center, the discovery service is used to resolve specific network services from URI identifiers. Requests are submitted to these services whose role is to assign them to the right service instances based on routing rules and/or load balancing strategies.

The module provides supporting classes to ease the development of discovery service implementations. In particular, it provides:

- base DNS discovery service implementation for resolving services from a hostname using DNS lookup

- base configuration discovery service implementation for resolving services from a specific service descriptor stored in a configuration source

- caching discovery service wrapper for automatically caching and refreshing resolved services

- composite discovery service which combines multiple discovery services into one

- base traffic load balancer implementations for random and round-robin strategies with support for weighted or non-weighted service instances
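As a rough illustration of a weighted round-robin strategy, the sketch below repeats each instance in a slot list as many times as its weight and cycles through the slots. It is one simple way to implement the idea, not the algorithm used by the Inverno load balancers; the class name `WeightedRoundRobinSketch` and the instance names are hypothetical.

```java
import java.util.ArrayList;
import java.util.LinkedHashMap;
import java.util.List;
import java.util.Map;
import java.util.concurrent.atomic.AtomicInteger;

// Illustrative only: a naive weighted round-robin, not the Inverno implementation.
final class WeightedRoundRobinSketch {

    private final List<String> slots = new ArrayList<>();
    private final AtomicInteger cursor = new AtomicInteger();

    // Each instance occupies as many slots as its weight.
    WeightedRoundRobinSketch(Map<String, Integer> weightedInstances) {
        weightedInstances.forEach((instance, weight) -> {
            for (int i = 0; i < weight; i++) {
                slots.add(instance);
            }
        });
        if (slots.isEmpty()) {
            throw new IllegalArgumentException("no instance with a positive weight");
        }
    }

    // floorMod keeps the index valid even after the counter overflows.
    String next() {
        return slots.get(Math.floorMod(cursor.getAndIncrement(), slots.size()));
    }

    public static void main(String[] args) {
        Map<String, Integer> instances = new LinkedHashMap<>();
        instances.put("instance-a", 2);
        instances.put("instance-b", 1);
        WeightedRoundRobinSketch balancer = new WeightedRoundRobinSketch(instances);
        for (int i = 0; i < 6; i++) {
            System.out.println(balancer.next());
        }
    }
}
```

With weights 2 and 1, the first instance receives two requests out of every three. A non-weighted round-robin is the special case where every weight is 1.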

io.inverno.mod.discovery.http

The Inverno discovery HTTP module specializes the Discovery API for HTTP service resolution by defining a specific HTTP traffic policy and shipping extra least requests and minimum load factor load balancing strategies. It also provides a DNS HTTP discovery service bean for resolving HTTP services through DNS lookup.

The module can be used jointly with the Web client module in order to resolve standard HTTP URIs (i.e. http://, https://, ws:// or wss://).

io.inverno.mod.discovery.http.k8s

The Inverno discovery HTTP Kubernetes module provides discovery service beans resolving HTTP services deployed in a Kubernetes cluster.

It currently exposes an environment based discovery service implementation which resolves the inet socket address of a service (i.e. host and port) from the environment variables defined by Kubernetes for each service in the cluster pods (i.e. <SERVICE_NAME>_SERVICE_HOST and <SERVICE_NAME>_SERVICE_PORT_HTTP).

The module can be used jointly with the Web client module in order to resolve HTTP services from k8s-env://<SERVICE_NAME> URIs in a Kubernetes cluster.
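The environment based resolution described above can be sketched in plain Java as follows. This is not the module's implementation: the class `K8sEnvResolverSketch` is hypothetical, and the name normalization (upper case, dashes replaced by underscores) follows the Kubernetes convention for service environment variables, which is assumed here to be what the module expects.

```java
import java.net.InetSocketAddress;
import java.util.Locale;
import java.util.Map;
import java.util.Optional;

// Illustrative only: not the io.inverno.mod.discovery.http.k8s implementation.
final class K8sEnvResolverSketch {

    // Looks up <SERVICE_NAME>_SERVICE_HOST and <SERVICE_NAME>_SERVICE_PORT_HTTP in the given environment.
    static Optional<InetSocketAddress> resolve(String serviceName, Map<String, String> env) {
        // Kubernetes upper-cases the service name and turns dashes into underscores.
        String prefix = serviceName.toUpperCase(Locale.ROOT).replace('-', '_');
        String host = env.get(prefix + "_SERVICE_HOST");
        String port = env.get(prefix + "_SERVICE_PORT_HTTP");
        if (host == null || port == null) {
            return Optional.empty();
        }
        // Unresolved: no DNS lookup is attempted here.
        return Optional.of(InetSocketAddress.createUnresolved(host, Integer.parseInt(port)));
    }

    public static void main(String[] args) {
        // "my-service" is a placeholder service name.
        System.out.println(resolve("my-service", System.getenv()));
    }
}
```

Outside a Kubernetes pod the variables are usually absent, in which case the sketch returns an empty result rather than failing.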

io.inverno.mod.discovery.http.meta

The Inverno discovery HTTP meta module specifies the HTTP meta service which, as its name suggests, combines several other HTTP services into one by routing requests whose content (path, method, content type, headers, query parameters...) matches specific rules to the corresponding service. It also supports request rewriting, including path, request/response headers and query parameters, as well as cross-service load balancing (i.e. load balancing requests among multiple services).

The module exposes a configuration based HTTP meta discovery service which resolves HTTP meta service descriptors from a configuration source.

The module can be used jointly with the Web client module in order to resolve HTTP services from conf://<SERVICE_NAME> URIs.

io.inverno.mod.grpc.base

The Inverno gRPC base module provides the foundational API as well as common services for developing gRPC clients and servers, such as message compressors (e.g. gzip, deflate, snappy...).

io.inverno.mod.grpc.client

The Inverno gRPC client module provides a service to convert HTTP client exchanges into gRPC client exchanges supporting the gRPC protocol over HTTP/2.

It supports the following features:

- unary, client streaming, server streaming and bidirectional streaming service methods as defined in gRPC core concepts

- metadata encoding and decoding

- RPC cancellation

- message compression (gzip, deflate, snappy)

Inverno tools provide a gRPC Protocol buffer compiler plugin for generating client stubs for each service definition.

io.inverno.mod.grpc.server

The Inverno gRPC server module provides a service to convert gRPC server exchange handlers supporting the gRPC protocol over HTTP/2 into HTTP server exchange handlers that can be injected in the HTTP server controller or Web routes to expose gRPC endpoints.

It supports the following features:

- unary, client streaming, server streaming and bidirectional streaming service methods as defined in gRPC core concepts

- metadata encoding and decoding

- RPC cancellation

- message compression (gzip, deflate, snappy)

Inverno tools provide a gRPC Protocol buffer compiler plugin for generating Web routes configurers for each service definition.

io.inverno.mod.http.base

The Inverno HTTP base module provides the foundational API as well as common services for HTTP client and server development, such as an extensible HTTP header service used to decode and encode HTTP headers.

It also provides a generic router API used to create routers to best match an input to a resource based on some set of criteria. This API is especially used in the Web server module for routing Web exchange to Web exchange handlers or in the Web client module for resolving interceptors.

io.inverno.mod.http.client

The Inverno HTTP client module provides a fully reactive HTTP/1.x and HTTP/2 client implementation based on Netty.

It supports the following features:

- SSL

- HTTP compression/decompression

- HTTP/2 over cleartext upgrade

- URL encoded form data

- Multipart form data

- WebSocket

io.inverno.mod.http.server

The Inverno HTTP server module provides a fully reactive HTTP/1.x and HTTP/2 server implementation based on Netty.

It supports the following features:

- SSL

- HTTP compression/decompression

- Server-sent events

- HTTP/2 over cleartext upgrade

- URL encoded form data

- Multipart form data

- WebSocket

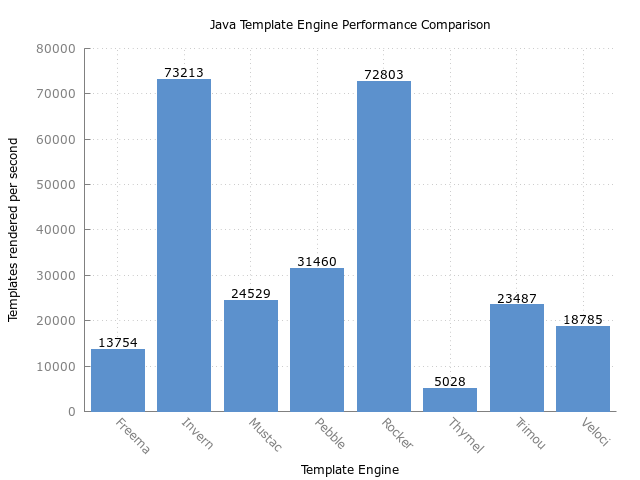

io.inverno.mod.irt

The Inverno Reactive Template module provides a reactive template engine including:

- reactive, near zero-copy rendering

- statically typed templates generated by the Inverno compiler at compile time

- pipes for data transformation

- functional syntax inspired from XSLT and Erlang on top of the Java language that perfectly embraces reactive principles

io.inverno.mod.ldap

The Inverno LDAP module specifies a reactive API for querying LDAP servers. It also includes a basic LDAP client implementation based on the JDK. It supports bind and search operations.

io.inverno.mod.redis

The Inverno Redis client module specifies a reactive API for executing Redis commands on a Redis data store. It supports:

- batch queries

- transactions

io.inverno.mod.redis.lettuce

The Inverno Redis client Lettuce implementation module provides a Redis client implementation on top of the Lettuce async pool.

It also provides a Redis Client bean backed by a Lettuce client and created using the module's configuration. It can be used as is to send commands to a Redis data store.

io.inverno.mod.security

The Inverno Security module specifies an API for authenticating requests to an application and controlling access to protected services or resources. It provides:

- User/password authentication against a user repository (in-memory, Redis...).

- Token based authentication.

- Session-based authentication.

- Strong user identification against a user repository (in-memory, Redis...).

- Secured password encoding using message digest, Argon2, Password-Based Key Derivation Function (PBKDF2), BCrypt, SCrypt...

- Role-based access control.

- Permission-based access control.
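Among the password encoding schemes listed above, PBKDF2 is available directly in the JDK. The following minimal sketch uses only `javax.crypto` and is not the Inverno Security API; the class `Pbkdf2Sketch`, its method names, the 256-bit key length and the iteration count are illustrative assumptions.

```java
import java.security.GeneralSecurityException;
import java.security.MessageDigest;
import java.security.SecureRandom;
import javax.crypto.SecretKeyFactory;
import javax.crypto.spec.PBEKeySpec;

// Illustrative only: JDK-based PBKDF2, not the Inverno password encoders.
final class Pbkdf2Sketch {

    // A fresh random salt must be generated and stored for each password.
    static byte[] newSalt() {
        byte[] salt = new byte[16];
        new SecureRandom().nextBytes(salt);
        return salt;
    }

    // Derives a 256-bit hash from the password, salt and iteration count.
    static byte[] encode(char[] password, byte[] salt, int iterations) {
        PBEKeySpec spec = new PBEKeySpec(password, salt, iterations, 256);
        try {
            return SecretKeyFactory.getInstance("PBKDF2WithHmacSHA256").generateSecret(spec).getEncoded();
        } catch (GeneralSecurityException e) {
            throw new IllegalStateException("PBKDF2 is not available", e);
        } finally {
            spec.clearPassword(); // clears the spec's internal copy of the password
        }
    }

    // Re-encodes the candidate and compares in constant time.
    static boolean matches(char[] candidate, byte[] salt, int iterations, byte[] expected) {
        return MessageDigest.isEqual(encode(candidate, salt, iterations), expected);
    }

    public static void main(String[] args) {
        byte[] salt = newSalt();
        int iterations = 100_000; // illustrative value, tune it to your hardware and policy
        byte[] stored = encode("s3cret".toCharArray(), salt, iterations);
        System.out.println(matches("s3cret".toCharArray(), salt, iterations, stored));
        System.out.println(matches("guess".toCharArray(), salt, iterations, stored));
    }
}
```

Only the salt, the iteration count and the derived hash need to be stored; the clear-text password never does.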

io.inverno.mod.security.http

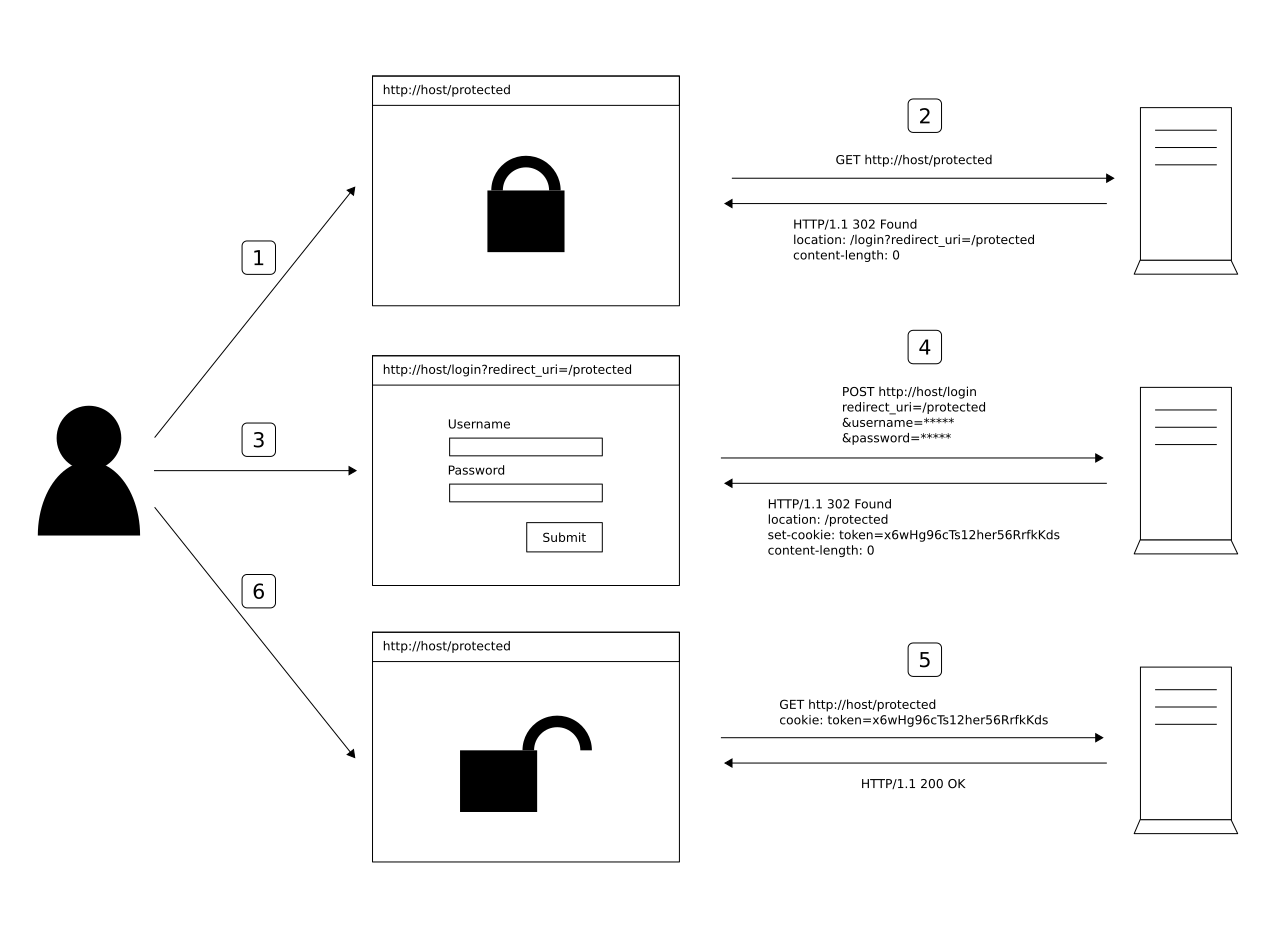

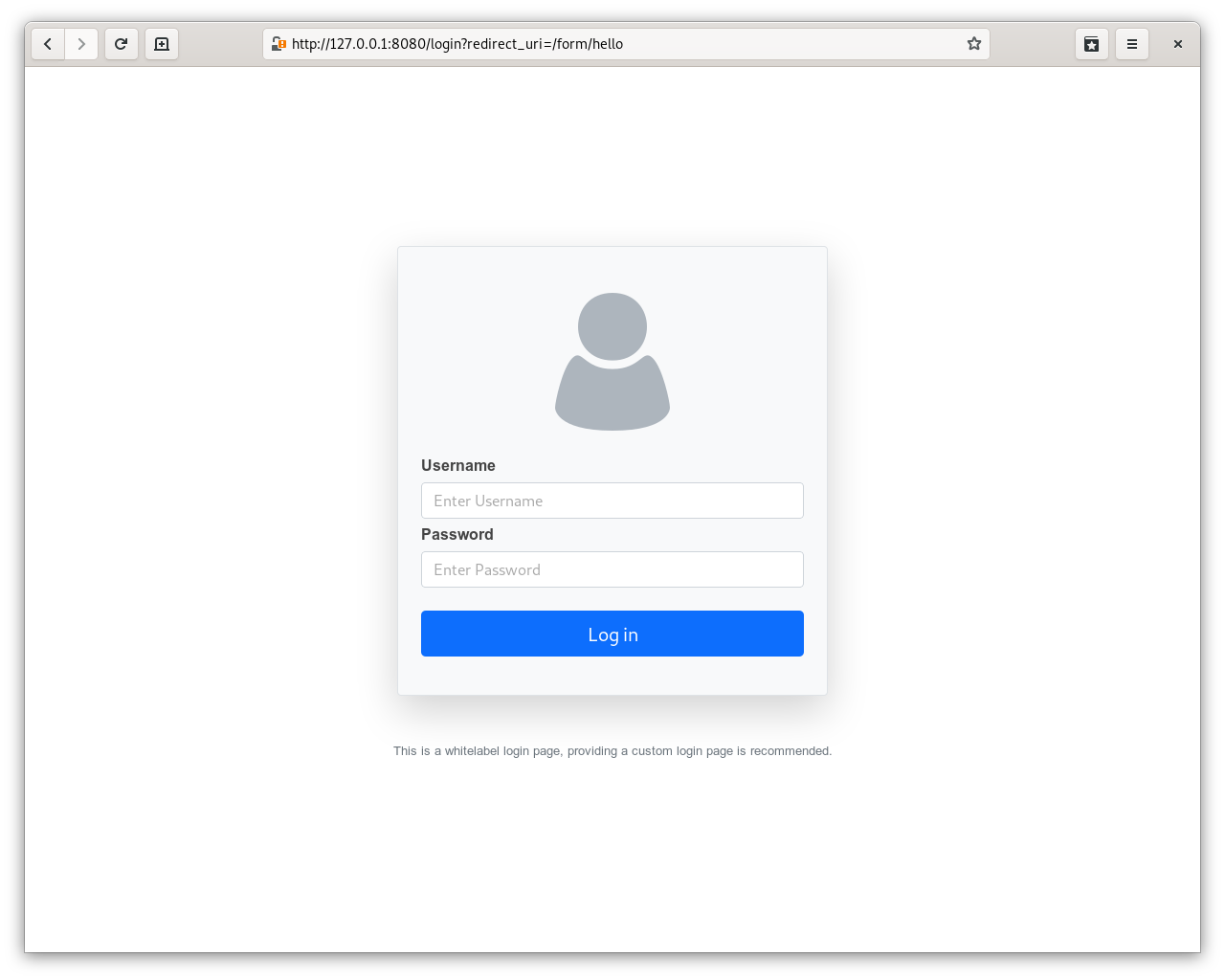

The Inverno Security HTTP module is an extension to the Inverno Security module that provides a specific API and base implementations for securing applications accessed via HTTP. It provides support for:

- HTTP basic authentication scheme.

- HTTP digest authentication scheme.

- Form based authentication.

- Cross-origin resource sharing (CORS).

- Protection against cross-site request forgery (CSRF) attacks.

io.inverno.mod.security.ldap

The Inverno Security LDAP module is an extension to the Inverno Security module that provides support for authentication and identification against LDAP and Active Directory servers.

io.inverno.mod.security.jose

The Inverno Security JOSE module is a complete implementation of JSON Object Signing and Encryption RFCs. It provides:

- a JWK service used to manipulate JSON Web Key as specified by RFC 7517 and RFC 7518.

- a JWS service used to create and validate JWS tokens as specified by RFC 7515.

- a JWE service used to create and decrypt JWE tokens as specified by RFC 7516.

- a JWT service used to create, validate or decrypt JSON Web Tokens as JWS or JWE as specified by RFC 7519.

- JWS and JWE compact and JSON representations support.

- JSON Web Key Thumbprint support as specified by RFC 7638.

- support for JWS Unencoded Payload Option as specified by RFC 7797.

- CFRG Elliptic Curve Diffie-Hellman (ECDH) and Signatures support as specified by RFC 8037.

- CBOR Object Signing and Encryption (COSE) as specified by RFC 8812.
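To give an idea of what a JWS in compact representation looks like (RFC 7515), here is a minimal sketch that signs and verifies a payload with HMAC SHA-256 using JDK classes only. It is not the Inverno JWS service; the class `JwsCompactSketch`, the payload and the secret are illustrative assumptions.

```java
import java.nio.charset.StandardCharsets;
import java.security.GeneralSecurityException;
import java.security.MessageDigest;
import java.util.Base64;
import javax.crypto.Mac;
import javax.crypto.spec.SecretKeySpec;

// Illustrative only: uses JDK classes, not the Inverno JWS service.
final class JwsCompactSketch {

    private static final Base64.Encoder BASE64_URL = Base64.getUrlEncoder().withoutPadding();

    // Builds BASE64URL(header) + "." + BASE64URL(payload) + "." + BASE64URL(signature).
    static String sign(String payloadJson, byte[] secret) {
        String header = "{\"alg\":\"HS256\"}";
        String signingInput = BASE64_URL.encodeToString(header.getBytes(StandardCharsets.UTF_8))
            + "." + BASE64_URL.encodeToString(payloadJson.getBytes(StandardCharsets.UTF_8));
        return signingInput + "." + BASE64_URL.encodeToString(hmacSha256(signingInput, secret));
    }

    // Recomputes the signature over the first two parts and compares in constant time.
    // A real validator must also parse the header and check the "alg" value.
    static boolean verify(String token, byte[] secret) {
        int lastDot = token.lastIndexOf('.');
        if (lastDot < 0) {
            return false;
        }
        try {
            byte[] actual = Base64.getUrlDecoder().decode(token.substring(lastDot + 1));
            return MessageDigest.isEqual(hmacSha256(token.substring(0, lastDot), secret), actual);
        } catch (IllegalArgumentException e) {
            return false; // the signature part is not valid base64url
        }
    }

    private static byte[] hmacSha256(String input, byte[] secret) {
        try {
            Mac mac = Mac.getInstance("HmacSHA256");
            mac.init(new SecretKeySpec(secret, "HmacSHA256"));
            return mac.doFinal(input.getBytes(StandardCharsets.US_ASCII));
        } catch (GeneralSecurityException e) {
            throw new IllegalStateException("HmacSHA256 is not available", e);
        }
    }

    public static void main(String[] args) {
        byte[] secret = "change-me-to-a-long-random-secret".getBytes(StandardCharsets.UTF_8);
        String token = sign("{\"sub\":\"jsmith\"}", secret);
        System.out.println(token);
        System.out.println(verify(token, secret));
    }
}
```

The JSON representation, JWE encryption and key management through JWK are beyond the scope of this sketch.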

io.inverno.mod.session

The Inverno Session module specifies an API for managing sessions that persist across more than one request to an application. It provides:

- Basic session support with data stored on the application side.

- JWT session support allowing a hybrid approach where stateless data can be stored in a JWT used as session identifier on the client side along with basic session data on the application side.

- In-memory and Redis session store implementations.

io.inverno.mod.session.http

The Inverno Session HTTP module is an extension to the Inverno Session module that provides a specific API and components to support sessions in a Web application.

io.inverno.mod.sql

The Inverno SQL client module specifies a reactive API for executing SQL statements on an RDBMS. It supports:

- prepared statements

- batch execution

- transactions

io.inverno.mod.sql.vertx

The Inverno SQL client Vert.x implementation module provides SQL Client implementations on top of Vert.x pool and pooled client.

It also exposes a pool-based SQL Client bean created using the module's configuration that can be used as is to query an RDBMS.

io.inverno.mod.web.base

The Inverno Web base module provides the foundational API as well as common services for Web client and server development, such as a data conversion service used to create inbound data decoder and outbound data encoder for respectively decoding and encoding HTTP or WebSocket payloads based on their media types.

It also defines common request parameter binding annotations for creating declarative Web client or Web server routes.

io.inverno.mod.web.client

The Inverno Web client module provides advanced features on top of the HTTP client module, including:

- path parameters

- exchange interceptors based on path, path pattern, HTTP method, request and response content negotiation including request and response content type and language of the response.

- seamless payload conversion from raw representation (arrays of bytes) to Java objects based on request or response content type as well as WebSocket subprotocol

- seamless parameter (path, cookie, header, query...) conversion from string to Java objects

- a complete set of annotations for creating declarative Web clients

Web clients can be created in a declarative way using annotations which are processed by the Inverno compiler to generate the Web client implementation and expose a corresponding bean in the module.

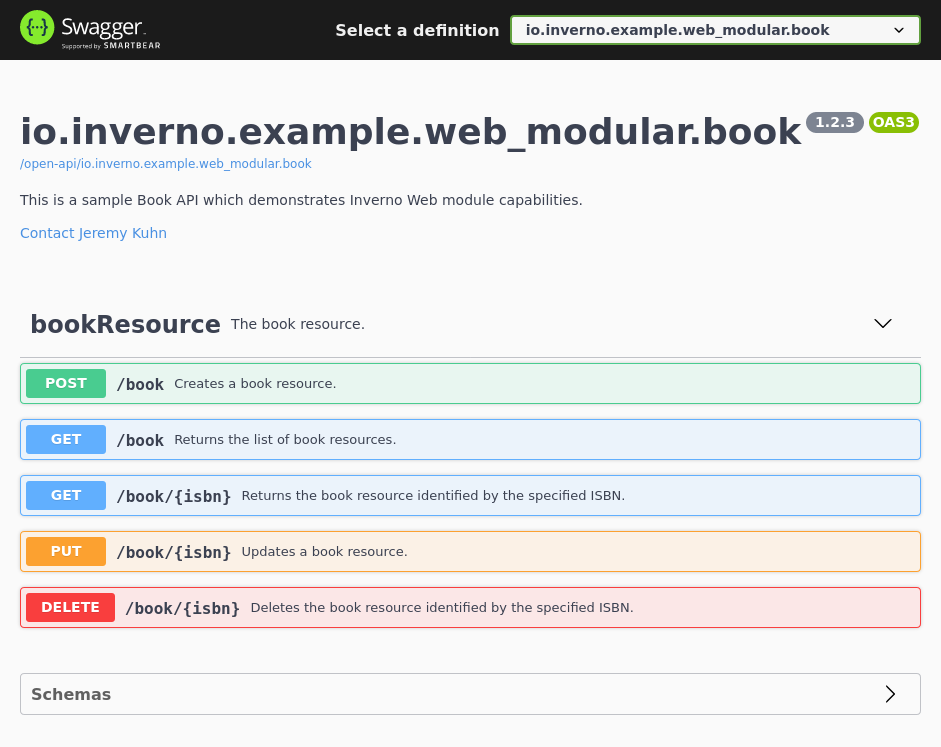

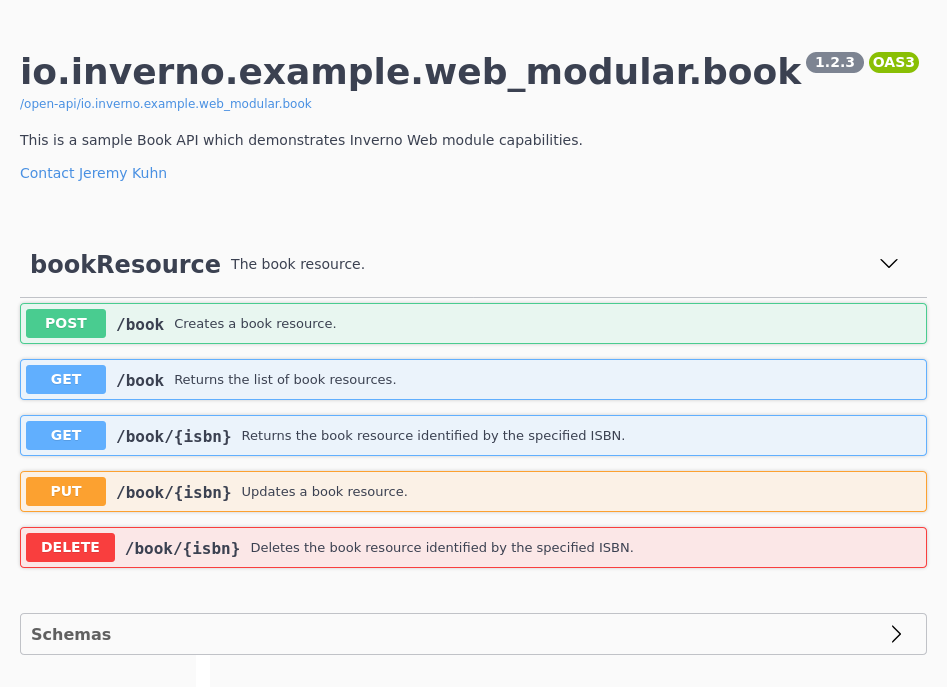

io.inverno.mod.web.server

The Inverno Web server module provides advanced features on top of the HTTP server module, including:

- path parameters

- request routing based on path, path pattern, HTTP method, request and response content negotiation including request and response content type and language of the response.

- exchange interceptors based on path, path pattern, HTTP method, request and response content negotiation including request and response content type and language of the response.

- transparent payload conversion from raw representation (arrays of bytes) to Java objects based on request or response content type as well as WebSocket subprotocol

- transparent parameter (path, cookie, header, query...) conversion from string to Java objects

- static resource handler to serve static resources from various locations based on the resource API

- a complete set of annotations for creating declarative REST controllers

REST controllers can be created in a declarative way using annotations which are processed by the Inverno compiler to generate corresponding Web server routes and register them in the Web server. The compiler also performs some static checks to make sure routes are defined properly and that there are no conflicting routes.

Inverno Tools

The Inverno framework provides tools for running and building modular Java applications, and Inverno applications in particular. For instance, they can create native runtime and application images providing all the dependencies required to run a modular application. It is also possible to build Docker and OCI images, install them on a local Docker daemon or deploy them to a remote registry.

Inverno Build Tools

Inverno Build Tools is a Java module exposing an API for running, packaging and distributing fully modular applications.

Inverno Maven Plugin

The Inverno Maven Plugin is a Maven plugin based on the Inverno Build tools module which provides multiple goals to:

- run or debug a modular Java application project.

- start/stop a modular Java application during the build process to execute integration tests.

- build native runtime image containing a set of modules and their dependencies creating a light Java runtime.

- build native application image containing an application and all its dependencies into an easy to install platform dependent package (e.g. .deb, .rpm, .dmg, .exe, .msi...).

- build docker or OCI images of an application into a tarball, a Docker daemon or a remote container image registry.

The plugin requires JDK 15+ and Apache Maven 3.6.0 or later.

Inverno gRPC Protocol Buffer compiler plugin

The Inverno gRPC Protoc plugin is a Protocol Buffer plugin for generating Inverno gRPC client and server stubs from Protocol Buffer service definitions.

Inverno Distribution

The Inverno distribution provides a parent POM io.inverno.dist:inverno-parent and a BOM io.inverno.dist:inverno-dependencies for developing Inverno components and applications.

The parent POM inherits from the BOM which inherits from the Inverno OSS parent POM. It provides basic build configuration for building Inverno components and applications, including dependency management and plugins configuration. It especially includes configuration for the Inverno Maven plugin.

The BOM specifies the Inverno core and Inverno modules dependencies as well as OSS dependencies.

The Inverno distribution thus defines a consistent set of dependencies and configuration for developing, building, packaging and distributing Inverno components and applications. Upgrading the Inverno framework version of a project boils down to upgrading the Inverno distribution version, which is the version of the Inverno parent POM or the Inverno BOM.

Requirements

The Inverno framework requires JDK 21 or later and Apache Maven 3.6.0 or later.

The Inverno compiler (when displaying bean dependency cycles), the Inverno tools (when displaying the progress bar) and the standard application banner (displayed when bootstrapping an Inverno application) output Unicode characters. These are supported out of the box by Linux and macOS terminals, but unfortunately not by the Windows terminal, for which Unicode support must be enabled explicitly. This can be done in Regional Settings > Administrative > Change system locale > Use Unicode UTF-8 for worldwide language support. Another viable solution is to use Git Bash on Windows, which also supports Unicode out of the box. Please note that this is purely cosmetic and has no impact on the applications.

Creating an Inverno project

The recommended way to start a new Inverno project is to create a Maven project which inherits from the io.inverno.dist:inverno-parent project. We might also want to add a dependency on io.inverno:inverno-core in order to create an Inverno module with IoC/DI:

    <project xmlns="http://maven.apache.org/POM/4.0.0"
             xmlns:xsi="http://www.w3.org/2001/XMLSchema-instance"
             xsi:schemaLocation="http://maven.apache.org/POM/4.0.0 http://maven.apache.org/xsd/maven-4.0.0.xsd">
        <modelVersion>4.0.0</modelVersion>

        <parent>
            <groupId>io.inverno.dist</groupId>
            <artifactId>inverno-parent</artifactId>
            <version>1.13.0</version>
        </parent>

        <groupId>io.inverno.example</groupId>
        <artifactId>sample-app</artifactId>
        <version>1.0.0-SNAPSHOT</version>

        <dependencies>
            <dependency>
                <groupId>io.inverno</groupId>
                <artifactId>inverno-core</artifactId>
            </dependency>
        </dependencies>
    </project>

That is all we need to develop, run, build, package and distribute a basic Inverno component or application. The Inverno parent POM provides dependency management and Java compiler configuration to invoke the Inverno compiler during the build process as well as Inverno tools configuration to be able to run and package the Inverno component or application.

If it is not possible to inherit from the Inverno parent POM, we can also declare the Inverno BOM io.inverno.dist:inverno-dependencies in the <dependencyManagement/> section to benefit from dependency management, at the cost of losing the plugin configuration, which must then be recovered from the Inverno parent POM.

    <project>
        <dependencyManagement>
            <dependencies>
                <dependency>
                    <groupId>io.inverno.dist</groupId>
                    <artifactId>inverno-dependencies</artifactId>
                    <version>1.13.0</version>
                    <type>pom</type>
                    <scope>import</scope>
                </dependency>
            </dependencies>
        </dependencyManagement>
    </project>

Inverno modules dependencies can be added in the <dependencies/> section of the project POM. For instance the following dependencies can be added to develop a REST microservice application:

    <project>
        <dependencies>
            <dependency>
                <groupId>io.inverno.mod</groupId>
                <artifactId>inverno-boot</artifactId>
            </dependency>
            <dependency>
                <groupId>io.inverno.mod</groupId>
                <artifactId>inverno-web-server</artifactId>
            </dependency>
        </dependencies>
    </project>

Please refer to the Inverno core documentation and Inverno modules documentation to learn how to develop with IoC/DI and how to use Inverno modules.

Developing a simple Inverno application

We can now start developing a sample REST application. An Inverno component or application is a regular Java module annotated with @io.inverno.core.annotation.Module, so the first thing we need to do is to create the Java module descriptor module-info.java under src/main/java, which is where Maven finds the sources to compile.

@io.inverno.core.annotation.Module

module io.inverno.example.sample_app {

requires io.inverno.mod.boot;

requires io.inverno.mod.web.server;

}

Note that we declared the io.inverno.mod.boot and io.inverno.mod.web.server module dependencies since we want to create a REST application. Please refer to the Inverno modules documentation to learn more.

We can then create the main class of our sample REST application in src/main/java/io/inverno/example/sample_app/App.java:

package io.inverno.example.sample_app;

import io.inverno.core.annotation.Bean;

import io.inverno.core.v1.Application;

import io.inverno.mod.base.resource.MediaTypes;

import io.inverno.mod.web.server.annotation.WebController;

import io.inverno.mod.web.server.annotation.WebRoute;

@Bean

@WebController

public class App {

@WebRoute( path = "/message", produces = MediaTypes.TEXT_PLAIN)

public String getMessage() {

return "Hello, world!";

}

public static void main(String[] args) {

Application.with(new Sample_app.Builder()).run();

}

}

Configuring logging

The Inverno framework uses Log4j 2 for logging. Inverno application logging can be activated by adding a dependency on org.apache.logging.log4j:log4j-core:

<project>

<dependencies>

<dependency>

<groupId>org.apache.logging.log4j</groupId>

<artifactId>log4j-core</artifactId>

</dependency>

</dependencies>

</project>

If you don't include this dependency at runtime, Log4j falls back to the SimpleLogger implementation provided with the API and configured using org.apache.logging.log4j.simplelog.* system properties. The log level can then be configured by setting -Dorg.apache.logging.log4j.simplelog.level=INFO system property when running the application.

Log4j 2 provides a default configuration with a default root logger level set to ERROR, resulting in no info messages being output when starting an application. This can be changed by setting the -Dorg.apache.logging.log4j.level=INFO system property when running the application.

However, the recommended way is to provide a specific log4j2.xml logging configuration file in the project resources under src/main/resources:

<?xml version="1.0" encoding="UTF-8"?>

<Configuration xmlns="http://logging.apache.org/log4j/2.0/config"

xmlns:xsi="http://www.w3.org/2001/XMLSchema-instance"

xsi:schemaLocation="http://logging.apache.org/log4j/2.0/config https://raw.githubusercontent.com/apache/logging-log4j2/rel/2.14.0/log4j-core/src/main/resources/Log4j-config.xsd"

status="WARN" shutdownHook="disable">

<Appenders>

<Console name="LogToConsole" target="SYSTEM_OUT">

<PatternLayout pattern="%d{DEFAULT} %highlight{%-5level} [%t] %c{1.} - %msg%n%ex"/>

</Console>

</Appenders>

<Loggers>

<Root level="info">

<AppenderRef ref="LogToConsole"/>

</Root>

</Loggers>

</Configuration>

Note that the Log4j shutdown hook must be disabled so as not to interfere with the Inverno application shutdown hook. If it is not disabled, application shutdown logs might be dropped.

We could have chosen to provide a default logging configuration in the Inverno framework itself, but we preferred to stick to standard Log4j 2 configuration rules in order to keep things simple so please refer to the Log4j 2 configuration documentation to learn how to configure logging.

Running the application

The application is now ready and can be run using the inverno:run goal:

$ mvn inverno:run

...

[INFO] --- inverno:1.6.0:run (default-cli) @ sample-app ---

2025-01-06 13:55:28,417 INFO [main] i.i.c.v.Application - Inverno is starting... ] Running project...

╔════════════════════════════════════════════════════════════════════════════════════════════╗

║ , ~~ , ║

║ , ' /\ ' , ║

║ , __ \/ __ , _ ║

║ , \_\_\/\/_/_/ , | | ___ _ _ ___ __ ___ ___ ║

║ , _\_\/_/_ , | | / _ \\ \ / // _ \ / _|/ _ \ / _ \ ║

║ , __\_/\_\__ , | || | | |\ \/ /| __/| | | | | | |_| | ║

║ , /_/ /\/\ \_\ , |_||_| |_| \__/ \___||_| |_| |_|\___/ ║

║ , /\ , ║

║ , \/ , -- 1.6.0 -- ║

║ ' -- ' ║

╠════════════════════════════════════════════════════════════════════════════════════════════╣

║ Java runtime : OpenJDK Runtime Environment ║

║ Java version : 21.0.2+13-58 ║

║ Java home : /home/jkuhn/Devel/jdk/jdk-21.0.2 ║

║ ║

║ Application module : io.inverno.example.sample_app ║

║ Application version : 1.0.0-SNAPSHOT ║

║ Application class : io.inverno.example.sample_app.App ║

║ ║

║ Modules : ║

║ * ... ║

╚════════════════════════════════════════════════════════════════════════════════════════════╝

2025-01-06 13:55:28,428 INFO [main] i.i.e.s.Sample_app - Starting Module io.inverno.example.sample_app...

2025-01-06 13:55:28,429 INFO [main] i.i.m.b.Boot - Starting Module io.inverno.mod.boot...

2025-01-06 13:55:28,652 INFO [main] i.i.m.b.Boot - Module io.inverno.mod.boot started in 222ms

2025-01-06 13:55:28,652 INFO [main] i.i.m.w.s.Server - Starting Module io.inverno.mod.web.server...

2025-01-06 13:55:28,653 INFO [main] i.i.m.h.s.Server - Starting Module io.inverno.mod.http.server...

2025-01-06 13:55:28,653 INFO [main] i.i.m.h.b.Base - Starting Module io.inverno.mod.http.base...

2025-01-06 13:55:28,658 INFO [main] i.i.m.h.b.Base - Module io.inverno.mod.http.base started in 4ms

2025-01-06 13:55:28,670 INFO [main] i.i.m.w.b.Base - Starting Module io.inverno.mod.web.base...

2025-01-06 13:55:28,670 INFO [main] i.i.m.h.b.Base - Starting Module io.inverno.mod.http.base...

2025-01-06 13:55:28,671 INFO [main] i.i.m.h.b.Base - Module io.inverno.mod.http.base started in 0ms

2025-01-06 13:55:28,672 INFO [main] i.i.m.w.b.Base - Module io.inverno.mod.web.base started in 1ms

2025-01-06 13:55:28,724 INFO [main] i.i.m.h.s.i.HttpServer - HTTP Server (nio) listening on http://0.0.0.0:8080

2025-01-06 13:55:28,725 INFO [main] i.i.m.h.s.Server - Module io.inverno.mod.http.server started in 72ms

2025-01-06 13:55:28,725 INFO [main] i.i.m.w.s.Server - Module io.inverno.mod.web.server started in 72ms

2025-01-06 13:55:28,779 INFO [main] i.i.e.s.Sample_app - Module io.inverno.example.sample_app started in 358ms

2025-01-06 13:55:28,779 INFO [main] i.i.c.v.Application - Application io.inverno.example.sample_app started in 411ms

We can now test the application:

$ curl http://127.0.0.1:8080/message

Hello, world!

The application can be gracefully shut down by pressing Ctrl-C.

Packaging the application image

In order to create a native image containing the application and all its dependencies including JDK's dependencies, we can simply invoke the inverno:package-app goal:

$ mvn inverno:package-app

...

[INFO] --- inverno:1.6.0:package-app (default-cli) @ sample-app ---

[INFO] Building application image: /home/jkuhn/Devel/git/frmk/io.inverno.example.sample-app/target/maven-inverno/application_linux_amd64/sample-app-1.0.0-SNAPSHOT...

[═════════════════════════════════════════════ 69 % ═══════════════> ] Packaging project application...

This uses the jpackage tool which is an incubating feature in JDK<16. If you intend to build an application image with an old JDK, you'll need to explicitly add the jdk.incubator.jpackage module in MAVEN_OPTS:

$ export MAVEN_OPTS="--add-modules jdk.incubator.jpackage"

This will create a ZIP archive containing a native application distribution target/sample-app-1.0.0-SNAPSHOT-application_linux_amd64.zip which will be deployed to the local Maven repository and eventually to a remote Maven repository.

Then, in order to install the application on a compatible platform, we just need to download the archive corresponding to the platform, extract it to some location and run the application. This can be done quite easily with the Maven dependency plugin:

$ mvn dependency:unpack -Dartifact=io.inverno.example:sample-app:1.0.0-SNAPSHOT:zip:application_linux_amd64 -DoutputDirectory=./

...

$ ./sample-app-1.0.0-SNAPSHOT/bin/sample-app

...

It is also possible to package platform-specific application distributions in .deb or .msi package formats and/or in zip archive format by defining particular package and/or archive formats in the Inverno Maven plugin configuration:

<project>

<build>

<plugins>

<plugin>

<groupId>io.inverno.tool</groupId>

<artifactId>inverno-maven-plugin</artifactId>

<executions>

<execution>

<id>package-app</id>

<phase>package</phase>

<goals>

<goal>package-app</goal>

</goals>

<configuration>

<packageTypes>

<packageType>deb</packageType>

</packageTypes>

<archiveFormats>

<archiveFormat>zip</archiveFormat>

</archiveFormats>

</configuration>

</execution>

</executions>

</plugin>

</plugins>

</build>

</project>

$ mvn install

...

Note that there is no cross-platform support and a given platform specific format must be built on the platform it runs on.

Such a platform-specific package can then be downloaded and installed using the right package manager:

$ mvn dependency:copy -Dartifact=io.inverno.example:sample-app:1.0.0-SNAPSHOT:deb:application_linux_amd64 -DoutputDirectory=./

...

$ sudo dpkg -i sample-app-1.0.0-SNAPSHOT-application_linux_amd64.deb

...

Inverno Core

Motivation

Inversion of Control and Dependency Injection principles are not new and many Java applications have been developed following these principles over the past two decades using frameworks such as Spring, CDI or Guice. However, these recognized solutions might have some issues in practice, especially with the way Java has evolved and how applications should be developed nowadays.

Dependency injection errors like a missing dependency or a cycle in the dependency graph are often reported at runtime when the application is started. Most of the time these issues are easy to fix but when considering big applications split into multiple modules developed by different people, it might become more complex. In any case you can't tell for sure if an application will start before you actually start it.

Most IoC/DI frameworks are black boxes, often considered as magical because one gets beans instantiated and wired altogether without understanding what just happened, and it is indeed quite hard to figure out how it actually works. This is not a problem as long as everything works as expected, but it can become one when you actually need to troubleshoot a failing application.

Beans instantiation and wiring are done at runtime using Java reflection which offers all the advantages of Java dynamic linking at the expense of some performance overhead. Classpath scanning, instantiation and wiring process indeed takes some time and prevents just-in-time compilation optimization making application startup quite slow.

Although IoC frameworks make the development of modular applications easier, they often require a rigorous methodology to make it the right way. For instance, you must know precisely what components are provided and/or required by all the modules composing an application and make sure one doesn't provide a component that might interfere with another.

These points are very high level. Please have a look at this article if you would like to learn more about the general ideas behind the Inverno framework. The Inverno framework proposes a new approach to IoC/DI principles, consistent with the latest developments of the Java™ platform and well suited to the development of modern applications in Java.

Prerequisites

In this documentation, we’ll assume that you have a working knowledge of Inversion of Control and Dependency Injection principles as well as Object-Oriented Programming.

Overview

The Inverno framework is different in many ways and tries to address the issues listed above. Its main difference is that it doesn't rely on Java reflection at all to instantiate the beans composing an application (IoC) and wire them together (DI). This is instead done by a class generated by the Inverno compiler at compile time.

Since beans and their dependencies are determined at compile time, errors can be raised precisely when they make sense during development or at build time.

There is also no need for complex runtime libraries since the complexity is handled by the compiler which generates a readable class providing only what is required at runtime. This presents two advantages. First, applications have a small footprint and start fast since most of the processing is already done and no reflection is involved. Second, you can actually debug all parts of your application since nothing is hidden behind a complex library: you can see when the beans are instantiated with the new operator, which also leaves room for other compile-time and runtime optimizations.
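To make this concrete, here is a minimal hand-written sketch of the kind of wiring a generated module class could perform. It is not the code the Inverno compiler actually produces: HandWiredModule, GreetingRepository and GreetingService are hypothetical names used only for illustration. The point is that beans are created with the new operator and wired through plain constructor calls, so every step can be read and debugged.

```java
import java.util.Objects;

// Hand-written stand-in for a generated module class (illustrative only,
// not the output of the Inverno compiler).
final class HandWiredModule {

    // Hypothetical bean with no dependencies.
    static class GreetingRepository {
        String findGreeting() {
            return "Hello";
        }
    }

    // Hypothetical bean that requires a GreetingRepository.
    static class GreetingService {
        private final GreetingRepository repository;

        GreetingService(GreetingRepository repository) {
            this.repository = Objects.requireNonNull(repository);
        }

        String greet(String name) {
            return repository.findGreeting() + ", " + name + "!";
        }
    }

    private final GreetingRepository greetingRepository;
    private final GreetingService greetingService;

    HandWiredModule() {
        // Dependencies are created first, then injected through constructors:
        // no classpath scanning and no reflection are involved.
        this.greetingRepository = new GreetingRepository();
        this.greetingService = new GreetingService(this.greetingRepository);
    }

    // Only the bean meant to be exposed gets an accessor.
    GreetingService greetingService() {
        return this.greetingService;
    }

    public static void main(String[] args) {
        System.out.println(new HandWiredModule().greetingService().greet("world"));
    }
}
```

A missing dependency in such code is a plain compilation error, which is the property the compile-time approach relies on.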

The framework also fully embraces the modular system introduced in Java 9 which basically imposes to develop with modularity in mind. An Inverno module only exposes the beans that must be exposed to other modules, and it clearly indicates the beans it requires to operate. All this makes modular development safer, clearer and more natural.

Modules and Beans

Inversion of control and dependency injection principles have proven to be an elegant and efficient way to create applications in an Object-Oriented Programming language. A Java application basically consists of a set of interconnected objects.

An Inverno application adds a modular dimension to these principles: the objects, or beans, composing the application are created and connected in one or more isolated modules which are themselves composed in the application.

A module encapsulates several beans and possibly other modules. It specifies the dependencies it needs to operate and only exposes the beans that need to be exposed from the module perspective. As a result it is isolated from the rest of the application, it is unaware of how and where it is used, and it actually doesn't care as long as its requirements are met. It really resembles a class which makes it very familiar to use.

A bean is a component of a module and more widely an application. It has required and optional dependencies provided by the module when a bean instance is created.

The Inverno compiler is an annotation processor which runs inside the Java compiler and generates module classes based on Inverno annotations found on the modules and classes being compiled.

Java module system

Before you can create your first Inverno module, you must first understand what a Java module is and how it might change your life as a Java developer. If you are already familiar with it, you can skip that section and go directly to the project setup section.

The Java module system has been introduced in Java 9 mostly to modularize the overgrowing Java runtime library which is now split into multiple interdependent modules loaded when you need them at runtime or compile time. This basically means that the size of the Java runtime you need to compile and/or run your application now depends on your application's needs which is a pretty big improvement.

If you know OSGi or Maven already, you might say that modules have existed in Java for a long time, but now they are fully integrated into the language, the compiler and the runtime. You can create a Java module, specify what packages are exposed and what dependencies are required, and the good part is that both the compiler and the JVM tell you when you do something wrong, as close as possible to the code. There are no more XML or manifest files to care about.

So how do you create a Java module? There is plenty of documentation you can read to have a complete and deep understanding of the Java module system, here we will only explain what you need to know to develop regular Inverno modules.

A Java module is specified in a module-info.java file located in the default package. Let's assume you want to create module io.inverno.example.sample, you can create the following file structure:

src

└── io.inverno.example.sample

├── io

│ └── inverno

│ └── example

│ └── sample

│ ├── internal

│ │ └── ...

│ └── ...

└── module-info.java

This is one way to organize the code, the only important thing is to put the module-info.java descriptor in the default package.

Now let's have a closer look at the module descriptor:

module io.inverno.example.sample { // 1

exports io.inverno.example.sample; // 2

}

- A module is declared using a familiar syntax starting with the module keyword followed by the name of the module which must be a valid Java name.

- The io.inverno.example.sample module exports the io.inverno.example.sample package which means that other modules can only access public types contained in that package. Any type defined in another package within that module is only visible from within the module following usual Java visibility rules (default, public, protected, private). This basically defines a new level of encapsulation at module level. For instance, types in package io.inverno.example.sample.internal are not accessible to other modules regardless of their visibility.

Now let's say you need to use some external types defined and exported in another module io.inverno.example.other:

src

├── io.inverno.example.sample

│ ├── ...

│ └── module-info.java

└── io.inverno.example.other

├── ...

└── module-info.java

If you try to reference any of these types in the io.inverno.example.sample module as is, the compiler will complain with explicit visibility errors unless you specify that the io.inverno.example.sample module requires the io.inverno.example.other module:

module io.inverno.example.sample {

requires io.inverno.example.other;

exports io.inverno.example.sample;

}

You should now be able to reference any public types defined in a package exported in io.inverno.example.other module.
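The requires and exports relationships can also be inspected programmatically. The following sketch uses the standard java.lang.module.ModuleDescriptor API to build, in memory, a descriptor equivalent to the one above and to list what it requires and exports. The class ModuleDescriptorSketch and its helper methods are illustrative names, not part of Inverno.

```java
import java.lang.module.ModuleDescriptor;
import java.util.Set;
import java.util.stream.Collectors;

final class ModuleDescriptorSketch {

    // Builds, in memory, the equivalent of:
    //   module io.inverno.example.sample {
    //       requires io.inverno.example.other;
    //       exports io.inverno.example.sample;
    //   }
    static ModuleDescriptor sampleDescriptor() {
        return ModuleDescriptor.newModule("io.inverno.example.sample")
            .requires("io.inverno.example.other")
            .exports("io.inverno.example.sample")
            .build();
    }

    // Names of the modules the descriptor depends on.
    static Set<String> requiredModules(ModuleDescriptor descriptor) {
        return descriptor.requires().stream()
            .map(ModuleDescriptor.Requires::name)
            .collect(Collectors.toSet());
    }

    // Names of the packages made visible to other modules.
    static Set<String> exportedPackages(ModuleDescriptor descriptor) {
        return descriptor.exports().stream()
            .map(ModuleDescriptor.Exports::source)
            .collect(Collectors.toSet());
    }

    public static void main(String[] args) {
        ModuleDescriptor descriptor = sampleDescriptor();
        System.out.println("module:   " + descriptor.name());
        System.out.println("requires: " + requiredModules(descriptor));
        System.out.println("exports:  " + exportedPackages(descriptor));
    }
}
```

Packages that are not exported, such as io.inverno.example.sample.internal, simply do not appear in the exported set, which mirrors the encapsulation enforced by the compiler and the JVM.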

The modular system has also changed the way Java applications are built and run. Previously, we specified a classpath listing the locations where the Java compiler and the JVM should look for the application's classes, whereas now we should specify a module path listing the locations of modules and forget about the classpath.

If we consider previous modules, they are compiled and run as follows:

> javac --module-source-path src -d jmods --module io.inverno.example.sample --module io.inverno.example.other

> java --module-path jmods/ --module io.inverno.example.sample/io.inverno.example.sample.Sample

There are other subtleties like transitive dependencies, service providers or opened modules and cool features like jmod packaging and the jlink tool but for now that's pretty much all you need to know to develop Inverno modules which are basically instrumented Java modules.

You should now have a basic understanding of how an Inverno application is built and what Java technologies are involved. An Inverno application results from the composition of multiple isolated modules which create and wire the beans making up the application. Almost everything is done at compile time when module classes are generated.

Project Setup

Maven

The easiest way to set up an Inverno module project is to start by creating a regular Java Maven project which inherits from io.inverno.dist:inverno-parent project and depends on io.inverno:inverno-core:

<project xmlns="http://maven.apache.org/POM/4.0.0"

xmlns:xsi="http://www.w3.org/2001/XMLSchema-instance"

xsi:schemaLocation="http://maven.apache.org/POM/4.0.0 http://maven.apache.org/xsd/maven-4.0.0.xsd">

<modelVersion>4.0.0</modelVersion>

<parent>

<groupId>io.inverno.dist</groupId>

<artifactId>inverno-parent</artifactId>

<version>1.13.0</version>

</parent>

<groupId>io.inverno.example</groupId>

<artifactId>sample</artifactId>

<version>1.0.0-SNAPSHOT</version>

...

<dependencies>

...

<dependency>

<groupId>io.inverno</groupId>

<artifactId>inverno-core</artifactId>

</dependency>

...

</dependencies>

...

</project>

Then you have to add a module descriptor to make it a Java module project. An Inverno module requires io.inverno.core and io.inverno.core.annotation modules. If you want your module to be used in other modules it must also export the package where the module class is generated by the Inverno compiler which is the module name by default. Remember that an Inverno module is materialized in a regular Java class subject to the same rules as any other class in a Java module.

module io.inverno.example.sample {

requires io.inverno.core;

requires io.inverno.core.annotation;

exports io.inverno.example.sample;

}

If you do not want your project to inherit from io.inverno.dist:inverno-parent project, you'll have to explicitly specify compiler source and target version (>=9), dependencies version and configure the Maven compiler plugin to invoke the Inverno compiler.

<project xmlns="http://maven.apache.org/POM/4.0.0"

xmlns:xsi="http://www.w3.org/2001/XMLSchema-instance"

xsi:schemaLocation="http://maven.apache.org/POM/4.0.0 http://maven.apache.org/xsd/maven-4.0.0.xsd">

<modelVersion>4.0.0</modelVersion>

<groupId>io.inverno.example</groupId>

<artifactId>sample</artifactId>

<version>1.0.0-SNAPSHOT</version>

<properties>

<project.build.sourceEncoding>UTF-8</project.build.sourceEncoding>

<maven.compiler.source>11</maven.compiler.source>

<maven.compiler.target>11</maven.compiler.target>

<version.inverno>1.6.0</version.inverno>

<version.inverno.dist>1.13.0</version.inverno.dist>

</properties>

<dependencyManagement>

<dependencies>

<dependency>

<groupId>io.inverno.dist</groupId>

<artifactId>inverno-dependencies</artifactId>

<version>${version.inverno.dist}</version>

<type>pom</type>

<scope>import</scope>

</dependency>

</dependencies>

</dependencyManagement>

<dependencies>

<dependency>

<groupId>io.inverno</groupId>

<artifactId>inverno-core</artifactId>

</dependency>

</dependencies>

<build>

<plugins>

...

<plugin>

<artifactId>maven-compiler-plugin</artifactId>

<configuration>

<annotationProcessorPaths>

<path>

<groupId>io.inverno</groupId>

<artifactId>inverno-core-compiler</artifactId>

<version>${version.inverno}</version>

</path>

</annotationProcessorPaths>

</configuration>

</plugin>

...

</plugins>

</build>

</project>

An Inverno module is built just like a regular Maven project using Maven commands (compile, package, install...). The module class is generated and compiled during the compile phase and included in the resulting JAR file during the package phase. If anything related to IoC/DI goes wrong during compilation, the compilation fails with explicit compilation errors reported by the Inverno compiler.

Gradle

Since Gradle 6.4, it is also possible to use Gradle to build Inverno module projects. Here is a sample build.gradle file:

plugins {

id 'application'

}

repositories {

mavenCentral()

}

dependencies {

implementation 'io.inverno:inverno-core:1.6.0'

annotationProcessor 'io.inverno:inverno-core-compiler:1.6.0'

}

java {

modularity.inferModulePath = true

sourceCompatibility = JavaVersion.VERSION_11

targetCompatibility = JavaVersion.VERSION_11

}

application {

mainModule = 'io.inverno.example.hello'

mainClassName = 'io.inverno.example.hello.App'

}

Bean

As you already know, a Java application can be reduced to the composition of objects working together. In an Inverno application, these objects are instantiated and injected into each other by one or more modules. Inside a module, a bean basically specifies what it needs to create a bean instance (DI) and how to obtain it (IoC).

A bean and a bean instance are two different things that should not be confused. A bean can result in multiple bean instances in the application whereas a bean instance always refers to exactly one bean. A bean is like a plan used to create instances.

A bean is fully identified by its name and the module in which it resides. The following notation is used to represent a bean qualified name: [MODULE]:[BEAN]. As a consequence, two beans with the same name cannot exist in the same module, but it is safe to have multiple beans with the same name in different modules.

Module Bean

A module bean is the primary type of bean you can create in an Inverno module. It is defined by a concrete class annotated with the @Bean annotation.

import io.inverno.core.annotation.Bean;

@Bean

public class SomeBean {

...

}

In the previous code we created a bean of type SomeBean. At compile time, the Inverno compiler will include it in the generated module class that you'll eventually use at runtime to obtain SomeBean instances.

By default, a bean is named after the simple name of the class starting with a lower case letter (e.g. someBean in our previous example). A different name can be specified in the annotation using the name attribute:

@Bean(name="customSomeBean")

public class SomeBean {

...

}
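The naming rules described above can be sketched in a few lines of plain Java. This is an illustration of the stated conventions (default name derived from the simple class name, qualified name written as [MODULE]:[BEAN]), not the Inverno compiler's implementation; BeanNamingSketch and its methods are hypothetical helpers.

```java
final class BeanNamingSketch {

    // Hypothetical bean class used for the demonstration.
    static class SomeBean {
    }

    // Default bean name: the simple class name with its first letter lower-cased
    // (an assumption based on the rule stated in the text).
    static String defaultBeanName(Class<?> beanClass) {
        String simpleName = beanClass.getSimpleName();
        return Character.toLowerCase(simpleName.charAt(0)) + simpleName.substring(1);
    }

    // Qualified name following the [MODULE]:[BEAN] notation.
    static String qualifiedName(String moduleName, String beanName) {
        return moduleName + ":" + beanName;
    }

    public static void main(String[] args) {
        String module = "io.inverno.example.sample";
        // Default name derived from the class
        System.out.println(qualifiedName(module, defaultBeanName(SomeBean.class)));
        // Name given explicitly, as with @Bean(name="customSomeBean")
        System.out.println(qualifiedName(module, "customSomeBean"));
    }
}
```

Because the module name is part of the qualified name, two modules can each define a someBean without conflict.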

Wrapper Bean

A wrapper bean is a particular form of bean used to define beans whose code cannot be instrumented with Inverno annotations or that require more complex logic to create the instance. This is especially the case for legacy code or third party libraries.

A wrapper bean is defined by a concrete class annotated with both @Bean and @Wrapper annotations which basically wraps the actual bean instance and includes the instantiation, initialization and destruction logic. It must implement the Supplier<E> interface whose type argument specifies the actual type of the bean.

@Wrapper

@Bean

public class SomeWrapperBean implements Supplier<SomeLegacyBean> {

private SomeLegacyBean instance;

public SomeWrapperBean() {

// Creates the wrapped instance

this.instance = ...

}

public SomeLegacyBean get() {

// Returns the wrapped instance

return this.instance;

}

...

}

In the previous code we created a bean of type SomeLegacyBean. One instance of the wrapper class is used to create exactly one bean instance, and it lives as long as the bean instance is referenced.

Since a wrapper bean is annotated with the @Bean annotation, it can be configured in the exact same way as a module bean except that the configuration only applies to the wrapper instance, which is responsible for configuring the actual bean instance. The wrapper instance is never exposed, only the actual bean instance wrapped in it is exposed. As for module beans, SomeLegacyBean instances can be obtained using the generated Module class.

Note that since a new wrapper instance is created every time a new bean instance is requested, a wrapper class is not required to return a new or distinct result in the get() method. Nonetheless, a wrapper instance is used to create, initialize and destroy exactly one instance of the supplied type, so it is good practice to have the wrapper instance always return the same bean instance. This is especially true, and happens naturally, when initialization or destruction methods are specified.

When designing a prototype wrapper bean, particular care must be taken to make sure the wrapper does not hold a strong reference to the wrapped instance in order to prevent memory leak when a prototype bean instance is requested by the application. It is strongly advised to rely on WeakReference<> in that particular use case.
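As an illustration of this advice, the following plain-Java sketch shows a wrapper that only keeps a weak reference to the wrapped instance. The @Bean and @Wrapper annotations are deliberately left out so that the sketch compiles without the framework, and SomeLegacyBean is a hypothetical stand-in type. Creating the instance lazily in get() is a design choice of this sketch (so that the instance cannot be collected before it is first handed out), not something prescribed by Inverno.

```java
import java.lang.ref.WeakReference;
import java.util.function.Supplier;

final class PrototypeWrapperSketch {

    // Hypothetical legacy type that cannot be annotated.
    static class SomeLegacyBean {
    }

    // Plain-Java stand-in for a prototype wrapper bean
    // (@Bean and @Wrapper omitted on purpose).
    static class SomeWrapperBean implements Supplier<SomeLegacyBean> {

        // Weak reference: the wrapper alone never keeps the instance alive.
        private WeakReference<SomeLegacyBean> reference = new WeakReference<>(null);

        @Override
        public synchronized SomeLegacyBean get() {
            SomeLegacyBean instance = this.reference.get();
            if (instance == null) {
                // Created on first request; the caller holds the strong reference.
                instance = new SomeLegacyBean();
                this.reference = new WeakReference<>(instance);
            }
            return instance;
        }
    }

    public static void main(String[] args) {
        SomeWrapperBean wrapper = new SomeWrapperBean();
        SomeLegacyBean first = wrapper.get();
        SomeLegacyBean second = wrapper.get();
        // While the caller still references the instance, the wrapper returns it again.
        System.out.println("same instance while referenced: " + (first == second));
    }
}
```

Once the application drops its last strong reference, the wrapped instance becomes eligible for garbage collection even if the wrapper itself is still reachable.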

Nested Bean

A nested bean is, as its name suggests, a bean inside a bean. A nested bean instance is obtained by invoking a particular method on another bean instance. Instances thus obtained participate in dependency injection but, unlike other types of bean, they do not follow any particular lifecycle or strategy: the implementor of the nested bean method is free to decide whether a new instance should be returned on each invocation.

A nested bean is declared in the class of a bean, by annotating a non-void method with no arguments with @NestedBean annotation. The name of a nested bean is given by the name of the bean providing the instance and the name of the annotated method following this notation: [MODULE]:[BEAN].[METHOD_NAME].

@Bean

public class SomeBean {

...

@NestedBean

public SomeNestedBean nestedBean() {

...

}

}

It is also possible to cascade nested beans.
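The way a nested bean takes part in dependency injection can be pictured with a small plain-Java sketch. The annotations are omitted so that it compiles on its own, and SomeConsumerBean is a hypothetical bean added for the demonstration. What the module does is reduced here to a method call whose result is passed to a constructor.

```java
final class NestedBeanSketch {

    // Hypothetical nested bean type.
    static class SomeNestedBean {
        String describe() {
            return "nested";
        }
    }

    // Bean providing the nested bean; nestedBean() would carry @NestedBean.
    static class SomeBean {
        private final SomeNestedBean nested = new SomeNestedBean();

        // The implementor decides what to return: here, always the same instance.
        SomeNestedBean nestedBean() {
            return this.nested;
        }
    }

    // Hypothetical bean depending on the nested bean type.
    static class SomeConsumerBean {
        private final SomeNestedBean dependency;

        SomeConsumerBean(SomeNestedBean dependency) {
            this.dependency = dependency;
        }

        String use() {
            return "using " + this.dependency.describe();
        }
    }

    public static void main(String[] args) {
        SomeBean someBean = new SomeBean();
        // Roughly what a module would do: invoke the nested bean method
        // and inject the result where it is required.
        SomeConsumerBean consumer = new SomeConsumerBean(someBean.nestedBean());
        System.out.println(consumer.use());
    }
}
```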

Mutator Bean

A mutator bean makes it possible to mutate a bean instance injected through a socket bean generated by the Inverno compiler. It is essentially a mutating socket bean with the ability to operate on or even replace the injected instance before it is actually wired to the module beans. It is typically used for setting up, instrumenting, decorating or completely transforming an external dependency in order to make it usable by the module beans.

A mutator bean must be defined as a class implementing Function<T, R> where <T> represents the socket type, basically the type of the instance to inject in the module, and <R> represents the type of the bean exposed in the module.

@Mutator

@Bean

public class SomeMutatorBean implements Function<ExternalType, InternalType> {

@Override

public InternalType apply(ExternalType instance) {...}

}

In the above example, the compiled module exposes a socket for injecting an ExternalType instance which is transformed to InternalType by the mutator bean when the module is started. Beans inside the module can then define sockets for injecting the resulting InternalType instance.
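The transformation step itself is ordinary Java, as the following standalone sketch shows. The @Mutator and @Bean annotations are omitted so that it compiles without the framework, and the bodies given to ExternalType and InternalType are hypothetical, chosen only to make the transformation visible.

```java
import java.util.function.Function;

final class MutatorSketch {

    // Hypothetical type of the instance injected into the module's socket.
    interface ExternalType {
        String value();
    }

    // Hypothetical type exposed to the beans inside the module.
    static class InternalType {
        private final String value;

        InternalType(String value) {
            this.value = value;
        }

        String value() {
            return this.value;
        }
    }

    // Plain-Java stand-in for the mutator bean (@Mutator and @Bean omitted).
    static class SomeMutatorBean implements Function<ExternalType, InternalType> {

        @Override
        public InternalType apply(ExternalType instance) {
            // Decorate or transform the external dependency here.
            return new InternalType("[wrapped] " + instance.value());
        }
    }

    public static void main(String[] args) {
        ExternalType external = () -> "external";
        // What happens conceptually at module startup: the injected instance
        // goes through the mutator before being wired to the module beans.
        InternalType internal = new SomeMutatorBean().apply(external);
        System.out.println(internal.value());
    }
}
```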

By default, the Inverno compiler creates a regular socket bean which is simply ignored when it is not wired and made optional when it is only wired to optional sockets. This behaviour can be changed with the required attribute in order to force the creation of a required socket bean and make sure an instance is always injected and the mutator invoked.

@Mutator( required = true )

@Bean

public class SomeMutatorBean implements Function<ExternalType, InternalType> {

@Override

public InternalType apply(ExternalType instance) {...}

}

Being able to force the creation of a required socket bean and the execution of the mutator makes it possible to modify an external object using beans or resources from inside the module. This can be particularly useful in applications composing multiple modules, each of which populates a global object.

Overridable

A module bean or a wrapper bean can be declared as overridable, which makes it possible to override the bean inside the module with a socket bean of the same type.

An overridable bean is defined as a module bean or a wrapper bean whose class has been annotated with @Overridable. This basically tells the Inverno compiler to create an extra optional socket bean with the particular feature of being able to take over the bean when an instance is provided on module instantiation.

@Overridable

@Bean

public class SomeBean {

}
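The effect of an overridable bean can be pictured with a hand-written module whose constructor accepts an optional replacement. This is only a sketch of the behaviour described above, not the class the Inverno compiler generates; Greeter, DefaultGreeter and SampleModule are hypothetical names.

```java
import java.util.Optional;

final class OverridableSketch {

    // Hypothetical bean type.
    interface Greeter {
        String greet();
    }

    // The bean defined inside the module; it would carry @Overridable and @Bean.
    static class DefaultGreeter implements Greeter {
        @Override
        public String greet() {
            return "default";
        }
    }

    // Hand-written stand-in for a module with one overridable bean.
    static class SampleModule {
        private final Greeter greeter;

        // The optional socket: when an instance is provided, it takes over the bean.
        SampleModule(Optional<Greeter> override) {
            this.greeter = override.orElseGet(DefaultGreeter::new);
        }

        Greeter greeter() {
            return this.greeter;
        }
    }

    public static void main(String[] args) {
        SampleModule plain = new SampleModule(Optional.empty());
        SampleModule overridden = new SampleModule(Optional.of(() -> "overridden"));
        System.out.println(plain.greeter().greet());
        System.out.println(overridden.greeter().greet());
    }
}
```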

Lifecycle

All bean instances follow the subsequent lifecycle in a module:

- A bean instance is created

- It is initialized

- It is active

- It is "eventually" destroyed

Let's examine each of these steps in detail.

A bean instance is always created in a module. When it is created greatly depends on the context in which it is used: it can be created when a module instance is started or when it is required in the application. In order to create a bean instance, the module must provide all the dependencies required by the bean. After that it sets any optional dependencies available on the instance thus obtained. This is actually when and where dependency injection takes place. This aspect will be covered in more detail in the following sections; for now all you have to know is that, when requested, the module creates a fully wired bean instance.

After that the module invokes initialization methods on the bean instance to initialize it. An initialization method is declared on the bean class using the @Init annotation:

@Bean

public class SomeBean {

@Init

public void init() {

...

}

}

You can specify multiple initialization methods but the order in which they are invoked is undetermined. Inheritance is not considered here, only the methods annotated on the bean class are considered. Bean initialization is useful when you want to execute some code after dependency injection to make the bean instance fully functional (e.g. initialize a connection pool, start a server socket...).

After that, the bean instance is active and can be used either directly by accessing it from the module or indirectly through another bean instance where it has been injected.

A bean instance is "eventually" destroyed, typically when its enclosing module instance is stopped. Just as you specified initialization methods, you can specify destruction methods to be invoked when a bean instance is destroyed using the @Destroy annotation:

@Bean

public class SomeBean {

@Destroy

public void destroy() {

...

}

}

As for initialization methods, you can specify multiple destruction methods but the order in which they are invoked is undetermined and inheritance is also not considered. Bean destruction is useful when you need to free resources that have been allocated by the bean instance during application operation (e.g. shutdown a connection pool, close a server socket...).
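
The four lifecycle steps can be sketched in plain Java. This is only an illustration and does not use the Inverno API: LifecycleSketch and Service are hypothetical names, and the hand-written start() and stop() methods merely stand in for the code that the Inverno compiler generates.

```java
import java.util.ArrayList;
import java.util.List;

// Hypothetical hand-written stand-in for a generated module class (not Inverno code).
class LifecycleSketch {

    // Records lifecycle events so their order can be inspected.
    final List<String> events = new ArrayList<>();

    // A bean with one required dependency (constructor) and one optional dependency (setter).
    class Service {
        private final String requiredName;
        private String optionalLabel = "default";

        Service(String requiredName) {
            this.requiredName = requiredName;
            events.add("created");
        }

        void setOptionalLabel(String optionalLabel) {
            this.optionalLabel = optionalLabel;
            events.add("wired");
        }

        // Plays the role of an @Init method.
        void init() {
            events.add("initialized");
        }

        // Plays the role of a @Destroy method.
        void destroy() {
            events.add("destroyed");
        }

        String describe() {
            return requiredName + "/" + optionalLabel;
        }
    }

    private Service service;

    void start() {
        service = new Service("service");   // 1. create with required dependencies
        service.setOptionalLabel("sample"); //    then set available optional dependencies
        service.init();                     // 2. initialize
    }

    Service service() {                     // 3. active: the instance can be used
        return service;
    }

    void stop() {
        service.destroy();                  // 4. destroy when the module is stopped
        service = null;
    }

    public static void main(String[] args) {
        LifecycleSketch module = new LifecycleSketch();
        module.start();
        System.out.println(module.service().describe());
        module.stop();
        System.out.println(module.events);
    }
}
```

The point of the sketch is the ordering: initialization only runs once the instance is fully wired, and destruction is driven by the module, not by the bean itself.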

In case of wrapper beans, the initialization and destruction of a bean instance is delegated to the initialization and destruction methods specified on the wrapper bean which respectively initialize and destroy the actual bean instance wrapped in the wrapper bean.

@Bean

@Wrapper

public class SomeWrapperBean implements Supplier<SomeLegacyBean> {

private SomeLegacyBean instance;

public SomeWrapperBean() {

// Creates the wrapped instance

this.instance = ...

}

@Init

public void init() {

// Initialize the wrapped instance

this.instance.start();

}

@Destroy

public void destroy() {

// Destroy the wrapped instance

this.instance.stop();

}

...

}

We stated here that all bean instances are eventually destroyed, but this is not always the case. Depending on the bean strategy and the context in which it is used, a bean instance might not be destroyed at all. Fortunately, workarounds exist to make sure a bean instance is always properly destroyed. We'll cover this in more detail when we describe bean strategies.

Visibility

A bean can be assigned a public or private visibility. A public bean is exposed by the module to the rest of the application whereas a private bean is only visible from within the module.

Bean visibility is set in the @Bean annotation in the visibility attribute:

@Bean(visibility=Visibility.PUBLIC)

public class SomeBean {

}

Strategy

A bean is always defined with a particular strategy which controls how a module creates a bean instance when one is requested, either during dependency injection, when the module needs a bean instance to inject into another bean instance, or during application operation, when some application code requests a bean instance from a module instance.

Singleton

The singleton strategy is the default strategy used when no explicit strategy is specified on a bean class. An Inverno module only creates one single instance for a singleton bean. That same instance is returned every time an instance of that bean is requested. It is then shared among all dependent beans through dependency injection and also the application if it has requested an instance.

A singleton bean is specified explicitly by setting the strategy attribute to Strategy.SINGLETON in the @Bean annotation:

@Bean(strategy = Strategy.SINGLETON)

public class SomeSingletonBean {

}

Modules easily support the bean lifecycle for singleton beans: since a module instance holds singleton bean instances by design, they can be properly destroyed when the module instance is stopped.

Particular care must be taken when the application requests a singleton bean instance from a module instance: the resulting reference points to a managed instance which will be destroyed when the module instance is stopped, leaving the instance referenced in the application in an unpredictable state.

A singleton bean is the basic building block of any application which explains why it is the default strategy. An application is basically made of multiple long living components rather than volatile disposable components. A server is a typical example of singleton bean, it is created when the application is started, initialized to accept requests and destroyed when the application is stopped.

A singleton instance is held by exactly one module instance: if you instantiate a module twice, you'll get two singleton bean instances, one in the first module instance and the other in the second. This differs from the standard singleton pattern. You'll see in more detail why this matters when we describe composite modules.
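
The difference with the standard singleton pattern can be illustrated with a minimal plain-Java sketch. SingletonScopeSketch is a hypothetical hand-written stand-in for a generated module class, not actual Inverno output; the only assumption it makes is that the singleton lives in an instance field of the module, not in a static field.

```java
// Hypothetical stand-in for a generated module class holding one singleton bean.
class SingletonScopeSketch {

    static class SomeSingletonBean { }

    // The singleton is held by the module instance, not by a static field.
    private SomeSingletonBean someSingletonBean;

    void start() {
        someSingletonBean = new SomeSingletonBean();
    }

    SomeSingletonBean someSingletonBean() {
        return someSingletonBean;
    }

    public static void main(String[] args) {
        SingletonScopeSketch first = new SingletonScopeSketch();
        SingletonScopeSketch second = new SingletonScopeSketch();
        first.start();
        second.start();

        // Same module instance: always the same bean instance.
        System.out.println(first.someSingletonBean() == first.someSingletonBean());   // true
        // Different module instances: different bean instances.
        System.out.println(first.someSingletonBean() == second.someSingletonBean());  // false
    }
}
```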

Prototype

A prototype bean results in the creation of as many instances as requested. Each dependent bean in the module gets a different bean instance, and a new instance is also created each time the application requests a bean instance from a module instance.

A prototype bean is specified by setting the strategy attribute to Strategy.PROTOTYPE in the @Bean annotation:

@Bean(strategy = Strategy.PROTOTYPE)

public class SomeBean {

}

Unlike singleton beans, modules can't always fully support the bean lifecycle for prototype beans. All prototype bean instances are kept in the module instance in order to destroy them when it is stopped. Modules use weak references to prevent memory leaks, so that dereferenced instances are automatically removed from the internal registry when the garbage collector reclaims them. This works well for prototype bean instances injected into singleton bean instances, since they remain referenced until the module instance is stopped, just like any singleton bean instance. It becomes tricky when a prototype bean instance is requested by the application. In that case, the prototype bean instance is removed from the module instance once it is dereferenced by the application and reclaimed by the garbage collector, leaving no chance for the module instance to destroy it properly. The actual behavior is more subtle, because a dereferenced prototype bean instance might still be destroyed if the module is stopped before the garbage collector reclaims the instance.
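
The weak-reference bookkeeping described above can be approximated with the following plain-Java sketch. It is an assumption about the general technique, not the code Inverno generates: PrototypeRegistrySketch and its list-based registry are hypothetical, and the real implementation may track instances differently.

```java
import java.lang.ref.WeakReference;
import java.util.ArrayList;
import java.util.List;

// Hypothetical stand-in for a module that tracks prototype instances weakly.
class PrototypeRegistrySketch {

    static class SomeBean {
        boolean destroyed;

        // Plays the role of a @Destroy method.
        void destroy() {
            destroyed = true;
        }
    }

    // Weak references let the garbage collector reclaim instances nobody uses anymore.
    private final List<WeakReference<SomeBean>> instances = new ArrayList<>();

    // A new instance is created and registered on every request.
    SomeBean someBean() {
        SomeBean bean = new SomeBean();
        instances.add(new WeakReference<>(bean));
        return bean;
    }

    // Destroys the instances that are still reachable; reclaimed ones are skipped.
    int stop() {
        int destroyedCount = 0;
        for (WeakReference<SomeBean> reference : instances) {
            SomeBean bean = reference.get();
            if (bean != null) {
                bean.destroy();
                destroyedCount++;
            }
        }
        instances.clear();
        return destroyedCount;
    }

    public static void main(String[] args) {
        PrototypeRegistrySketch module = new PrototypeRegistrySketch();
        SomeBean first = module.someBean();
        SomeBean second = module.someBean();
        System.out.println(first != second); // each request yields a new instance

        int destroyedCount = module.stop();
        System.out.println(destroyedCount + " " + first.destroyed + " " + second.destroyed);
    }
}
```

In this sketch both instances are destroyed because the caller still holds them when stop() is invoked. Had the caller dropped them and the garbage collector run first, reference.get() would return null and destroy() would never be called, which is the situation the paragraph above warns about.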

As a result, it is not recommended to define destruction methods on a prototype bean. If you really need to, you can make your bean implement AutoCloseable, specify the close() method as the only destruction method and request prototype bean instances from the application using a try-with-resources block:

@Bean(strategy = Strategy.PROTOTYPE)

public class SomeBean implements AutoCloseable {

@Destroy

public void close() throws Exception {

...

}

}

Then when requesting a prototype bean instance from the application:

try(SomeBean bean = module.someBean()) {

...

}

As soon as the program exits the try-with-resources block, the bean instance is properly destroyed, then dereferenced, eventually reclaimed by the garbage collector and finally removed from the module instance. However, you should make sure that the close() method can safely be called twice, since the module might invoke it again if it is stopped before the instance has been reclaimed.
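
One way to make close() safe to call twice is to guard it with a flag, as in the minimal plain-Java sketch below. IdempotentCloseSketch and its releaseCount field are hypothetical names used for illustration; the Inverno annotations are left out so that the example stays self-contained.

```java
import java.util.concurrent.atomic.AtomicBoolean;

// Hypothetical bean whose close() method releases resources at most once.
class IdempotentCloseSketch implements AutoCloseable {

    private final AtomicBoolean closed = new AtomicBoolean(false);

    // Number of times resources were actually released.
    int releaseCount;

    @Override
    public void close() {
        // Only the first call flips the flag and releases resources.
        if (closed.compareAndSet(false, true)) {
            releaseCount++;
        }
    }

    public static void main(String[] args) {
        IdempotentCloseSketch bean = new IdempotentCloseSketch();
        try (IdempotentCloseSketch resource = bean) {
            // use the bean
        }
        bean.close(); // second call, e.g. issued by the module when it is stopped
        System.out.println(bean.releaseCount); // 1
    }
}
```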

Prototype beans should be used whenever there is a need to hold a state in a particular context. An HTTP client is a typical example of a stateful instance, different instances should be created and injected in singleton beans so they can deal with concurrency independently to make sure requests are sent only after a response to the previous request has been received.

That might not be the smartest way to use HTTP clients in an application, but it gives you the idea.

Prototype beans can also be used to implement the factory pattern: just like with a factory, you can request new bean instances from a module. The Inverno framework makes this very powerful since there's no runtime overhead, modules can be created and used anywhere, and you never have to worry about the boilerplate code that instantiates the bean since it is generated for you by the framework.

Module

An Inverno module can be seen as an isolated collection of beans. The role of a module is to create and wire bean instances in order to expose logic to the application.

In practice, a module is materialized by a class that the Inverno compiler generates during compilation by processing Inverno annotations.

A module is isolated from the rest of the application through its module class which clearly defines the beans exposed by the module and what it needs to operate. As a result, a module doesn’t care when and how it is used in an application as long as its requirements are met.

Isolation is actually what makes the Inverno framework so special as it greatly simplifies the development of complex modular applications.

A module is defined as a regular Java module annotated with the @Module annotation:

@Module

module io.inverno.sample.sampleModule {

...

}

The module class

Java modules annotated with @Module will be processed by the Inverno compiler at compile time. The Inverno compiler generates one module class per module providing all the code required at runtime to create and wire bean instances.

This class is the entry point of a module and serves several purposes:

- encapsulate bean instance creation and wiring logic

- implement bean instance lifecycle

- specify required or optional module dependencies

- expose public beans

- hide private beans

- guarantee a proper isolation of the module within the application

This regular Java class can be instantiated like any other class. It relies on a minimal, barely visible runtime library, which makes it self-describing and very easy to use.

Let’s see what it looks like for the io.inverno.sample.sampleModule module and the SomeBean bean. The module class would be used as follows:

SampleModule module = new SampleModule.Builder().build(); // 1

module.start(); // 2

SomeBean someBean = module.someBean(); // 3

// Do something useful with someBean

module.stop(); // 4

- The SampleModule class is instantiated

- The module is started

- The SomeBean instance is retrieved

- Eventually the module is stopped

There are two important things to notice here. First, you control when, where and how many times you want to instantiate a module, which brings great flexibility in the way modules are used in your application. For instance, integrating an Inverno module into existing code is pretty straightforward as it is plain old Java. It is also possible to create and use a module instance during application operation (e.g. when processing a request). Second, beans are exposed with their actual types through named methods, which produces safer code because static type checking can (finally) be performed by the compiler.

Module classes provide dedicated builders to facilitate the creation of complex module instances with multiple required and optional dependencies.
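
To give an idea of how a builder can separate required from optional dependencies, here is a minimal plain-Java sketch. It is hand-written and hypothetical: BuilderSketch, dataSourceUrl and timeoutSeconds are illustrative names, and passing required dependencies to the builder constructor is an assumption, so the builder that Inverno actually generates may look different.

```java
import java.util.Objects;

// Hypothetical hand-written stand-in for a generated module class and its builder.
class BuilderSketch {

    private final String dataSourceUrl; // required dependency
    private final int timeoutSeconds;   // optional dependency

    private BuilderSketch(String dataSourceUrl, int timeoutSeconds) {
        this.dataSourceUrl = dataSourceUrl;
        this.timeoutSeconds = timeoutSeconds;
    }

    String dataSourceUrl() {
        return dataSourceUrl;
    }

    int timeoutSeconds() {
        return timeoutSeconds;
    }

    static class Builder {
        private final String dataSourceUrl;
        private int timeoutSeconds = 30; // fallback when the optional dependency is not set

        // Required dependencies are passed to the constructor so they cannot be forgotten.
        Builder(String dataSourceUrl) {
            this.dataSourceUrl = Objects.requireNonNull(dataSourceUrl, "dataSourceUrl is required");
        }

        // Optional dependencies are set through chained setters.
        Builder setTimeoutSeconds(int timeoutSeconds) {
            this.timeoutSeconds = timeoutSeconds;
            return this;
        }

        BuilderSketch build() {
            return new BuilderSketch(dataSourceUrl, timeoutSeconds);
        }
    }

    public static void main(String[] args) {
        BuilderSketch module = new BuilderSketch.Builder("jdbc:sample")
            .setTimeoutSeconds(5)
            .build();
        System.out.println(module.dataSourceUrl() + " " + module.timeoutSeconds());
    }
}
```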

By default, the module class is named after the last identifier of the module name and generated in a package named after the module. The full class name can be specified in the annotation using the className attribute:

@Module(className="io.inverno.sample.CustomSampleModule")

module io.inverno.sample.sampleModule {

...

}

The module class is like any other class in the module: if you want to use it outside the module, you have to explicitly export its package in the module descriptor:

@Module

module io.inverno.sample.sampleModule {

exports io.inverno.sample.sampleModule;

}

Most of the time this is something you’ll do especially if you want to create composite modules. However, if you only use the module class from within the module, typically in a main method or embedded in some other class, you won’t have to do it.